Last month, journalists at the New York Times began collecting images from a publicly accessible camera on the roof of a restaurant in Midtown Manhattan. After gathering one day’s footage, the team employed Amazon Rekognition, an online service that uses artificial intelligence (AI) to match faces from different images, to see if they could put names to the 2,750 pictures they had collected. To the concern of local workers, they found that it worked, even from a partial image or a steep angle. For around $60 (£46), the system was able to identify large numbers of people without their knowledge or consent.

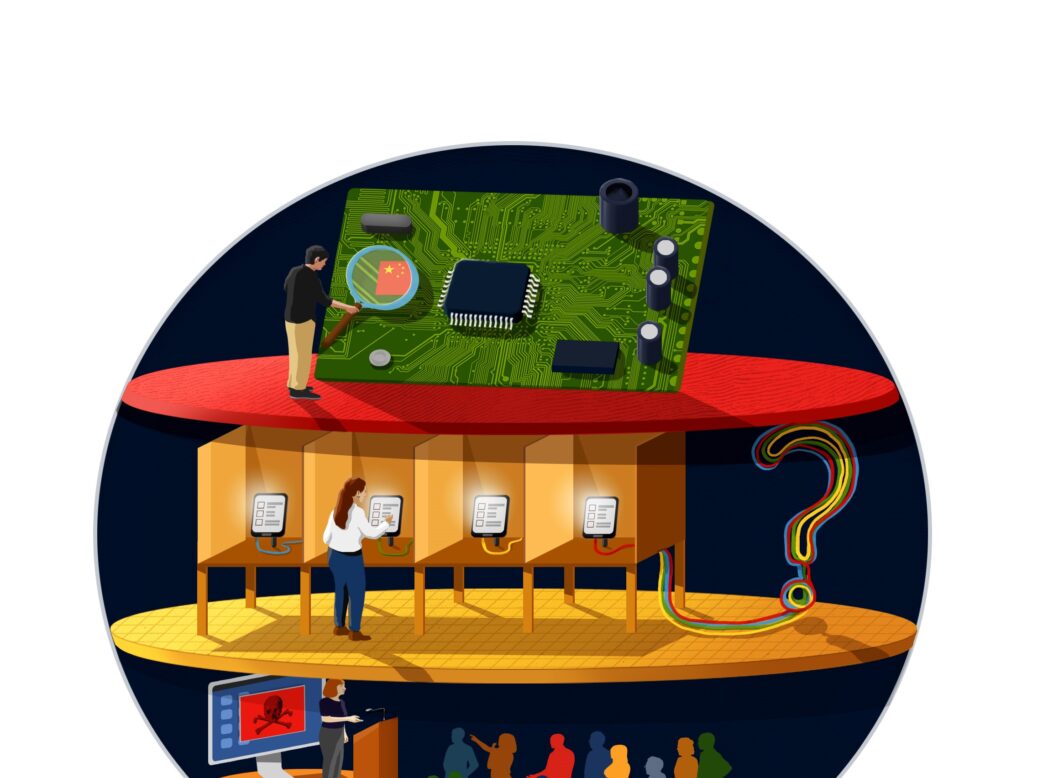

Most governments and police forces have yet to use this technology. But for the 22 million people living in Xinjiang, western China, it is already a reality of everyday life. In order to monitor the movements of the region’s Uighur Muslim population, the Chinese government has built one of the most sophisticated surveillance systems in the world, using artificial intelligence not only to identify individuals but also for ethnic profiling. China is now exporting its surveillance technology to other countries.

For policymakers, this is the reality of the threat that AI poses. This technology is no longer a Hollywood script idea; it is already cheaply and widely available. And as we have seen with other technology used to invade citizens’ privacy, the capabilities it offers will soon be useful to criminals, too. There are hundreds of ways in which AI and the automatic identification it offers could be used to impersonate, to defraud, to blackmail and to steal. Machine learning is already being used to make life-changing decisions, from arrests to mortgage offers, in ways that are too often impossible to explain. Crime is a question of agency; when decisions are made inexplicably, by code, it will become very difficult to say who is responsible.

The European Union has taken a first step towards addressing this issue with a list of unenforced guidelines on ethical AI. But Europe’s AI sector pales in comparison to China’s, and its most promising startups tend to be snapped up by foreign tech giants, many of which are themselves investing heavily in controlling the AI ethics debate. Britain, Europe and perhaps even the US are likely to fall behind as less regulated regimes scramble to capitalise on AI, but they may yet lead the way in making it safer for their citizens.
