Artificial intelligence is reshaping how we work, create, and live. In the years ahead, its impact will only deepen, revolutionising everything from the public services delivered by government to the creation of our favourite content. As the AI revolution accelerates, the stakes have never been higher. The decisions that governments make today will either secure a nation’s standing in the global AI race or hobble it.

The UK is in a formidable position to take full advantage, boasting world-class research institutions, a vibrant innovation ecosystem, and access to global capital. Britain also has the potential to make targeted investments in digital skills to support future generations and strengthen the country’s ability to scale its AI capabilities and drive growth.

The AI Opportunities Action Plan and recent remarks from the Prime Minister make clear that the government understands the UK’s opportunity to become a global AI leader and position the British economy for decades of future growth.

At Adobe, we understand how important it is to invest in the future and find successful ways to bring everyone along on that journey. When it came to building Adobe Firefly, our family of commercially safe and IP-friendly generative AI models, we did so with transparency, responsibility and user control at the forefront. Our approach to AI is rooted in respect for creators and guided by the belief that creativity is a uniquely human trait – and that generative AI has the power to assist and amplify human ingenuity, not replace it.

This approach can also be applied to public policy. With countries around the world racing to lead in AI, it has become clear that, for the UK to succeed in AI adoption and readiness, it must establish a policy framework that both encourages innovation and sets creators up for future success. The question now is: which regulatory components will have the most impact, and how can the UK strike the right balance?

The UK’s creative sector is a global powerhouse, contributing £124bn in gross value added to the UK economy in 2024. With more than 16 million Brits expected to contribute to the creator economy by 2027, it is clear how critical it is to craft AI policies that allow the creative landscape to thrive.

Generative AI is revolutionising and amplifying human creativity. When used responsibly, AI can eliminate tedious tasks and help creators focus on what they do best: being creative. We are already seeing how AI is transforming the creative process, and it is likely that the next generation of creatives will use AI in some form in their work. At the same time, we recognise the concerns voiced by the creative community: style imitation, copyright infringement, and the loss of control over their work. These challenges are global but are particularly pertinent in the UK, given the scale and significance of its creative economy.

Questions remain about the use of copyrighted data to train AI models. While views differ, we welcome the UK government’s recent consultation and commitment to solving key policy challenges rather than waiting for the outcome of court cases in years to come. Progress is possible through joint action by government and industry to protect and empower creators.

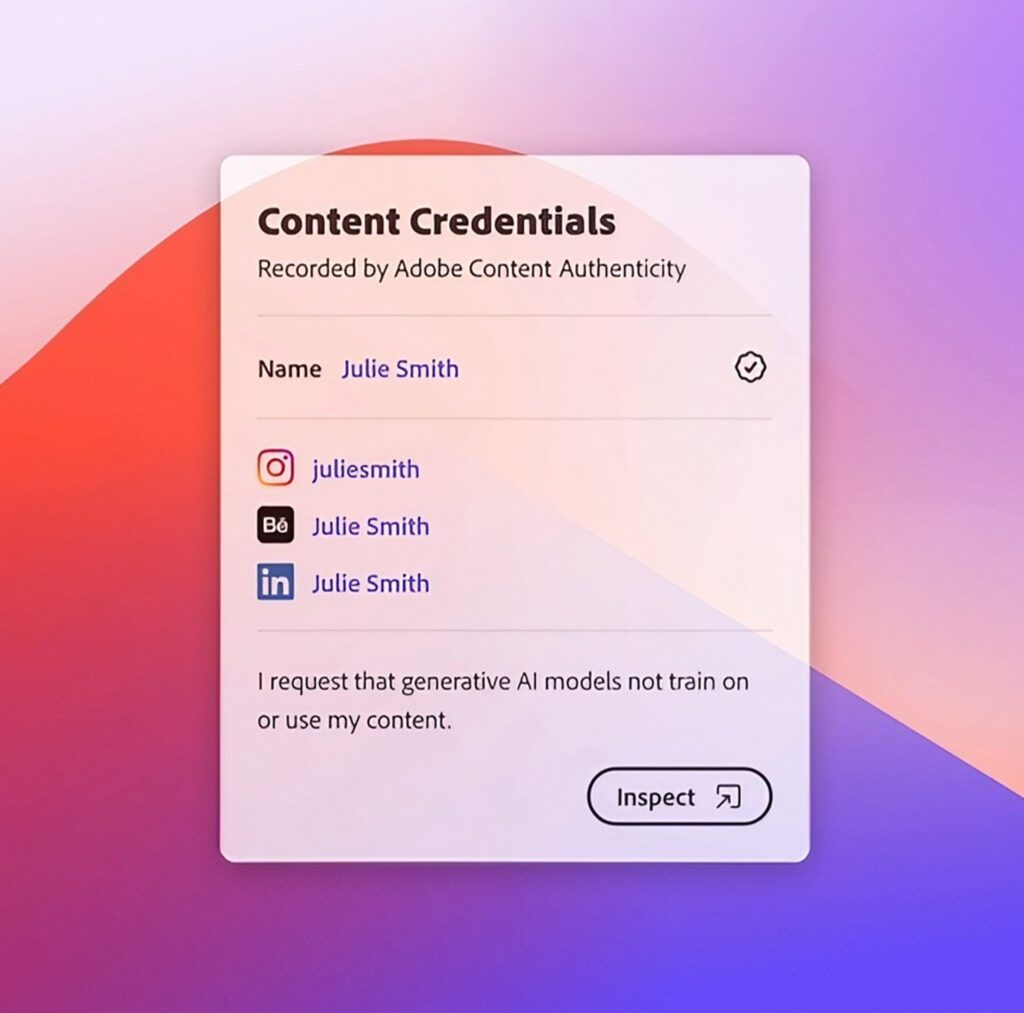

Adobe has long championed the work of the Coalition for Content Provenance and Authenticity (C2PA). Adobe’s Content Credentials, built on the C2PA’s open technical standard, provide a kind of “nutrition label” for digital content, detailing its origins, including who created it, when, and any edits made along the way.

Content Credentials offer creators a trusted way to disclose their creative process and show the provenance of their content. The technology has been integrated into Adobe Content Authenticity, a free web application that enables creators to easily apply these credentials to their work. But the app goes further, allowing creators to signal that their content should not be used to train generative AI models. The result: creators gain a measure of control over whether their work is used as input for AI training.

As the UK government considers the responses to its copyright and AI consultation, Adobe is calling on it to support industry-wide adoption of solutions like Content Credentials, which can signal to AI developers each creator’s preference on whether their content may be used for training.

This approach aligns with recent developments in the EU, where the Code of Practice for General-Purpose AI now requires AI developers to identify and comply with machine-readable reservations of rights, demonstrating how regulatory frameworks are increasingly recognising creators’ opt-out preferences.

While much of the focus has understandably been on the content that goes into developing AI models – the input – there are also measures to consider that would protect creators and consumers with respect to what comes out of AI models – the output.

A creator’s unique artistic style is their signature and core to their livelihood. Yet AI can be misused to replicate these styles without consent, enabling bad actors to compete directly with artists using AI-generated imitations. Adobe has been advocating for new anti-impersonation rights that would give creators a way to protect themselves against those who intentionally and commercially impersonate their work through the misuse of AI tools.

The US is also moving in this direction through legislation like the Preventing Abuse of Digital Replicas Act, which was introduced in the House of Representatives and aims to protect creators against unauthorised digital reproductions. As the UK develops a framework for AI innovation and creator protection, we believe it can play a leading role by granting creators a right of action against bad actors who misuse AI to imitate their style.

Like any groundbreaking new technology, generative AI can be used for bad as well as good. In recent years, bad actors have exploited AI to generate misleading or deceptive content, often targeting public figures and politicians.

Democratic societies like Britain, with mature media and information ecosystems, face heightened risks, especially as trusted institutions with global reach, such as the BBC, can become targets for deceptive content that trades on their credibility.

The good news is that solutions do exist. This is where Content Credentials can again be critical. In an era of deepfakes and synthetic media, authenticity matters more than ever. Content Credentials offer a practical solution by giving digital content greater context. Looking ahead, we believe Content Credentials could also lay the foundations for future licensing frameworks, simplifying attribution, rights protection, and monetisation.

Adobe is urging the UK government to champion Content Credentials as an industry standard, alongside a comprehensive anti-impersonation right of action that protects creatives against those misusing AI tools. The government can also lead by example by applying Content Credentials to official government content, helping to build trust and giving citizens a way to verify the authenticity of digital communications.

AI is moving fast, and the UK has both the ambition and the foundations to lead. But leadership demands action. This means clear, future-proof regulation, industry-wide adoption of responsible tools, and a sustained commitment to protecting creators. The decisions made now will shape industries of the future. By working together, government and industry can ensure the UK serves as a global leader in AI for decades to come.