Mythos and the insecurity avalanche

Apocalyptic warnings about Anthropic’s Mythos model boost its proprietor’s image

Reviewing politics and culture since 1913

Abba Voyage’s hologram performance was an astounding success. But tribute artists aren’t worried yet

Donald Trump gambled on Iran – so did I

How AI captured Westminster

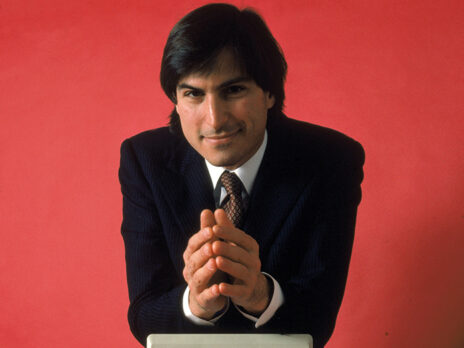

How the electronics company went from near bankruptcy to global dominance – and changed our lives along the way

Called before MPs, tech execs display their constant vigilance against threats

AI doesn’t look set to replace the sommeliers anytime soon

A love letter to the internet that once was
